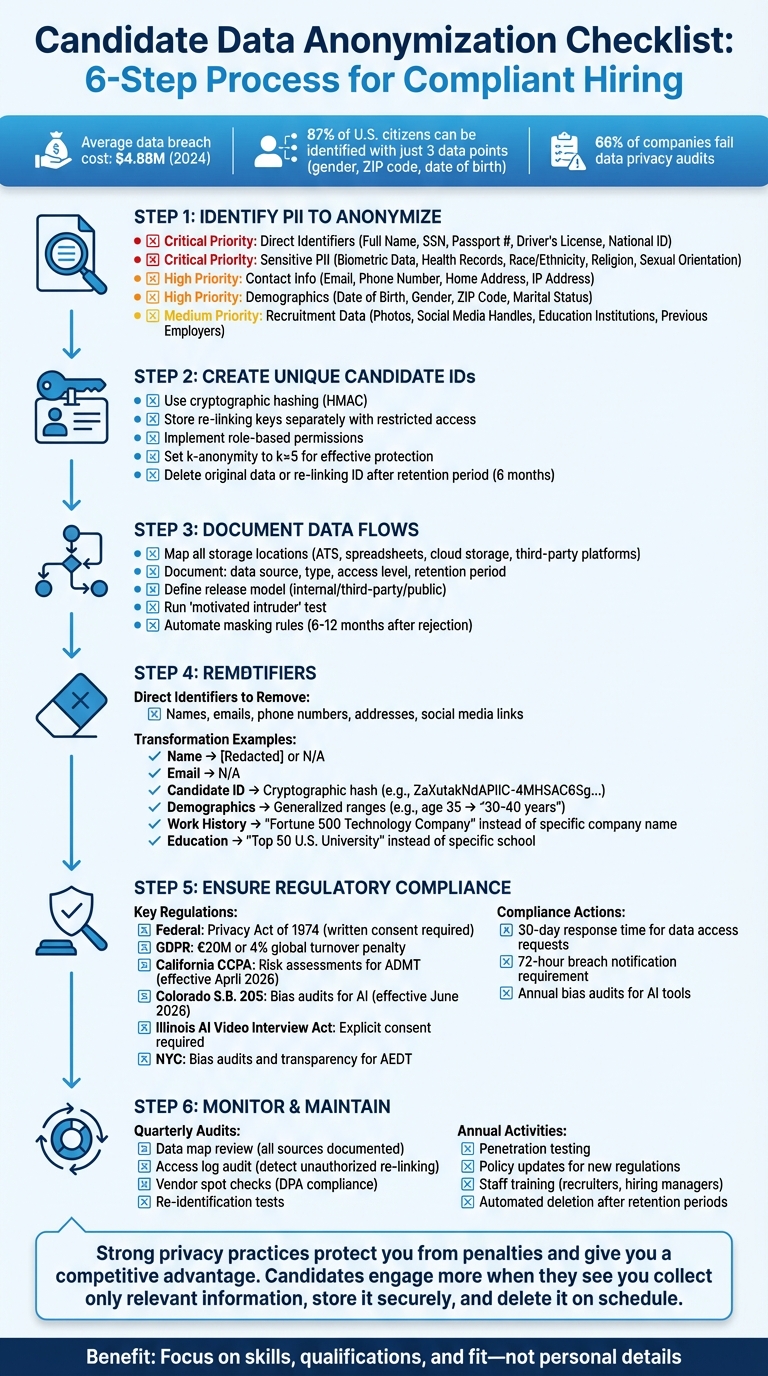

Candidate data anonymization ensures hiring decisions are based on qualifications, not personal details, while complying with privacy laws like GDPR and CCPA. By removing or masking identifiers such as names, emails, and demographic details, companies can reduce bias, protect privacy, and mitigate legal risks. Here’s what you need to know:

- Why it matters: Reduces unconscious bias, ensures compliance with regulations, and avoids costly data breaches (average cost: $4.88M in 2024).

- Preparation steps: Identify all PII (e.g., names, emails, IP addresses), assign unique candidate IDs, and map data flows.

- Key actions: Remove personal identifiers, anonymize demographics, generalize work/education details, and safeguard communication channels.

- Tools: Use automation (e.g., Skillfuel) for consistent anonymization, retention schedules, and compliance tracking.

- Legal compliance: Align with applicable federal and state laws, such as California’s CCPA, Colorado S.B. 205, and the Illinois AI Video Interview Act.

- Ongoing maintenance: Conduct audits, update policies, and train staff regularly to stay aligned with evolving regulations.

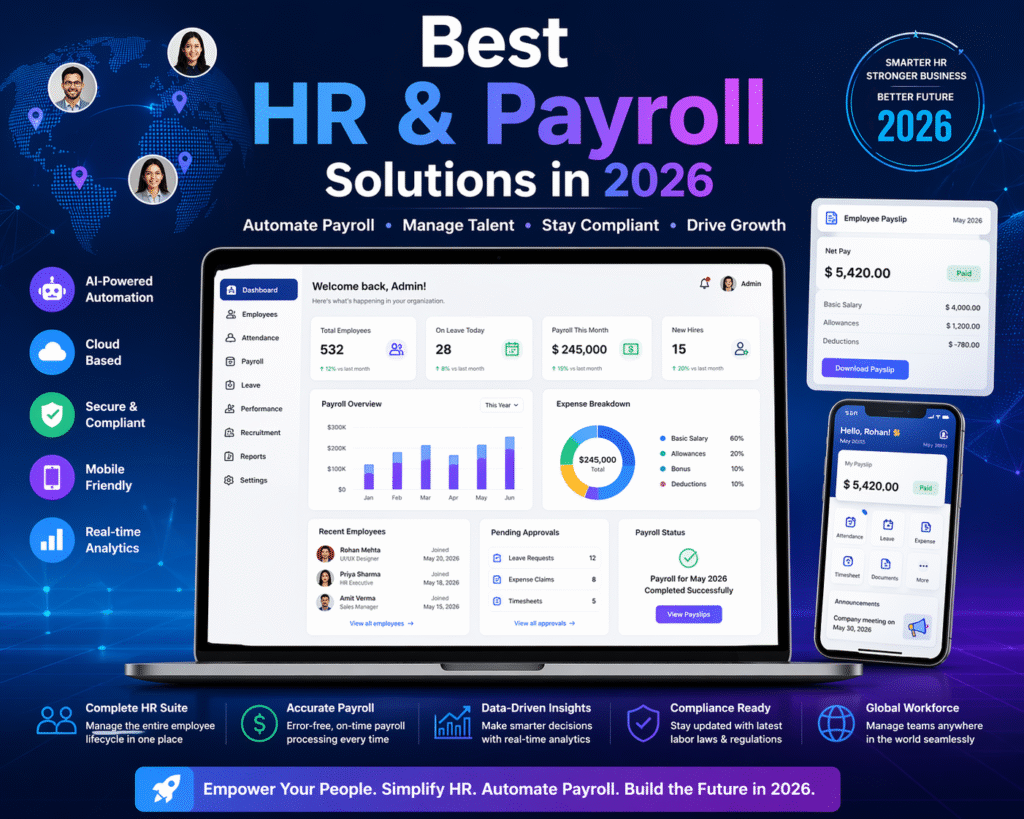

Complete Candidate Data Anonymization Workflow: 6-Step Compliance Checklist


Preparation Steps Before Anonymization

Before diving into anonymization, it’s essential to map out candidate data thoroughly. This ensures you don’t miss anything, over-redact information, or lose the ability to track records. Following a structured approach helps maintain consistency and compliance with legal standards.

Identify PII That Needs Anonymization

The first step is to identify all personally identifiable information (PII) that could reveal a candidate’s identity. This includes both direct identifiers, like names and government-issued IDs, and indirect identifiers, which can be combined to pinpoint someone. For example, one widely cited study estimated that 87% of the U.S. population can be uniquely identified using just three data points: gender, five-digit ZIP code, and full date of birth.

Pay close attention to sensitive PII that carries higher legal risks under regulations like GDPR. This includes details like race, ethnicity, religious beliefs, health records, and biometric data (e.g., fingerprints or facial recognition). In recruitment, even seemingly harmless data – like photos or social media handles – can introduce bias without being relevant to a candidate’s qualifications.

Don’t forget to review contact information such as email addresses, phone numbers, IP addresses, and physical addresses. Even employment and education histories can reveal someone’s identity if they include specific institutions or employers. While this information is vital for evaluating qualifications, anonymizing certain details (like school names) can reduce bias while still offering insights into skills and experience.

| PII Category | Specific Data Points to Identify | Priority Level |
|---|---|---|
| Direct Identifiers | Full Name, SSN, Passport #, Driver’s License, National ID | Critical |
| Sensitive PII | Biometric Data, Health Records, Race/Ethnicity, Religion, Sexual Orientation | Critical |
| Contact Info | Email, Phone Number, Home Address, IP Address | High |
| Demographics | Date of Birth, Gender, ZIP Code, Marital Status | High |
| Recruitment Data | Photos, Social Media Handles, Education Institutions, Previous Employers | Medium |
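As a rough illustration of the identification step, the sketch below scans free text (a resume body or recruiter note) for a few of the data points listed above. The patterns and the `find_pii` helper are assumptions made for this example; they cover only simple U.S.-style formats and are no substitute for a reviewed PII inventory.

```python
import re

# Illustrative patterns only; real resumes need broader, locale-aware rules.
PII_PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "us_phone": re.compile(r"(?:\+1[\s.-]?)?\(?\d{3}\)?[\s.-]?\d{3}[\s.-]?\d{4}"),
    "ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "ipv4": re.compile(r"\b(?:\d{1,3}\.){3}\d{1,3}\b"),
}


def find_pii(text: str) -> dict[str, list[str]]:
    """Return every match per category so a reviewer can confirm or dismiss it."""
    hits = {}
    for label, pattern in PII_PATTERNS.items():
        matches = pattern.findall(text)
        if matches:
            hits[label] = matches
    return hits


sample = "Contact jane.doe@example.com or (555) 123-4567. SSN 123-45-6789."
print(find_pii(sample))
```

Pattern matching like this tends to catch contact details and ID numbers; names, photos, and employer references usually need a separate review pass.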

Once you’ve identified all relevant PII, the next step is to create secure, unique identifiers for tracking candidates.

Create Unique Candidate IDs

After pinpointing the data to anonymize, assign a unique candidate ID to each record in your applicant tracking system (ATS). This ID acts as a replacement for direct identifiers like names or Social Security numbers. A reliable way to generate these IDs is a keyed cryptographic hash such as HMAC, which turns an input (for example, a normalized email address) into a fixed-length string that cannot practically be reversed or recomputed without the secret key.

For example, a 2024 case study reported that cryptographic hashing, combined with k-anonymity of k=5, effectively prevented re-identification. To keep the IDs secure, store the secret key and the lookup table that links each unique ID to the original data separately from the anonymized records, with restricted access. Implement role-based permissions so data analysts can work with anonymized data without accessing the lookup table.
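A minimal sketch of HMAC-based candidate IDs, using Python's standard `hmac` and `hashlib` modules. The `cand_` prefix, the choice of email as the input, the 24-character truncation, and the in-memory `lookup_table` are all assumptions for illustration; a real deployment would load the key from a secrets manager and keep the lookup table in a separate, access-controlled store.

```python
import hashlib
import hmac
import secrets

# Assumption: in production the key comes from a secrets manager, not from code.
SECRET_KEY = secrets.token_bytes(32)


def candidate_id(email: str, key: bytes = SECRET_KEY) -> str:
    """Derive a stable pseudonymous ID from a normalized email address."""
    normalized = email.strip().lower().encode("utf-8")
    digest = hmac.new(key, normalized, hashlib.sha256).hexdigest()
    return f"cand_{digest[:24]}"


# Hypothetical lookup table; keep it apart from the anonymized records.
lookup_table: dict[str, dict[str, str]] = {}


def register(name: str, email: str) -> str:
    """Store the re-linking entry and return the ID used everywhere else."""
    cid = candidate_id(email)
    lookup_table[cid] = {"name": name, "email": email}
    return cid


cid = register("Jane Doe", "Jane.Doe@example.com")
print(cid)
```

Because the same email and key always produce the same ID, a returning applicant maps to the existing record. Rotating or destroying the key breaks that link.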

For unsuccessful candidates, delete either the original data or the re-linking ID after a defined retention period (e.g., six months). This final step ensures the anonymization process is complete. Tools like Skillfuel’s centralized dashboard can simplify assigning and managing these unique IDs while keeping your data consistent across the hiring workflow.

Document Data Flows and Set Goals

A critical part of preparation is mapping out where candidate data is stored. This includes local spreadsheets, shared drives, cloud storage, your ATS, and any third-party platforms. For each storage location, document the data source, type, access level, and retention period. This mapping helps identify where sensitive PII is exposed and where anonymization measures are necessary.
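One way to keep such a data map reviewable is to record it as structured data rather than prose. The sketch below is an assumption about how that might look; the `DataSource` fields mirror the attributes named above, while the `SENSITIVE_TYPES` set and the flagging rule are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class DataSource:
    name: str
    data_types: set
    access_level: str                 # e.g. "recruiters", "all-staff"
    retention_months: Optional[int]   # None means no retention period is defined
    anonymized: bool = False


# Hypothetical list of data types treated as identifying in this example.
SENSITIVE_TYPES = {"name", "email", "phone", "date_of_birth", "photo"}


def needs_attention(sources: list) -> list:
    """Flag sources that hold identifying data unmasked or lack a retention period."""
    flagged = []
    for s in sources:
        if s.anonymized:
            continue
        if (s.data_types & SENSITIVE_TYPES) or s.retention_months is None:
            flagged.append(s.name)
    return flagged


sources = [
    DataSource("ATS", {"name", "email"}, "recruiters", 12, anonymized=True),
    DataSource("Shared drive", {"resume", "name"}, "all-staff", None),
    DataSource("Analytics export", {"skills"}, "analysts", 24),
]
flagged = needs_attention(sources)
print(flagged)
```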

Next, define your "release model": Will the data be used internally, shared with third parties, or made public? Each of these scenarios has different risks regarding re-identification. To test your anonymization efforts, simulate an external re-identification attempt. This involves seeing if someone without prior knowledge but with access to public resources (like social media or public records) could identify a candidate from your anonymized data.

Finally, automate masking rules for different data types in your ATS. For instance, set triggers to anonymize data six to twelve months after a candidate is marked as "Rejected". Tailor these rules based on candidate categories, such as external applicants versus former employees, as legal requirements for retention and anonymization often vary.
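The retention trigger described above comes down to a date comparison. This sketch assumes two candidate categories with retention periods of 6 and 12 months; the category names, the periods, and the function names are illustrative, not values taken from any particular ATS or statute.

```python
import calendar
from datetime import date

# Assumed retention periods per candidate category, in months.
RETENTION_MONTHS = {"external_applicant": 6, "former_employee": 12}


def add_months(d: date, months: int) -> date:
    """Shift a date forward by whole months, clamping to the month's last day."""
    index = d.month - 1 + months
    year, month = d.year + index // 12, index % 12 + 1
    day = min(d.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


def due_for_anonymization(status: str, category: str,
                          rejected_on: date, today: date) -> bool:
    """True once a rejected candidate's retention period has elapsed."""
    if status != "Rejected":
        return False
    return today >= add_months(rejected_on, RETENTION_MONTHS[category])


print(due_for_anonymization("Rejected", "external_applicant",
                            date(2025, 1, 15), date(2025, 7, 15)))
```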

Candidate Data Anonymization Checklist

After mapping your data flows and assigning unique IDs, the next step is executing the anonymization process. Here’s a detailed guide to removing identifying information while still keeping your hiring data useful for analysis and decision-making.

Remove Personal Identifiers

The first step is to strip out direct identifiers – the most obvious pieces of personal information. This includes full names, email addresses, phone numbers, physical addresses, and social media links (like LinkedIn profiles). Replace these with unique candidate IDs.

Next, remove or generalize indirect identifiers (quasi-identifiers) that could lead to re-identification when combined with other data. Examples include graduation dates, specific job locations, or unique job titles.

Before diving in, clearly define your redaction scope. Decide which fields to remove – like photos, supervisor names, or references to specific projects – and apply these rules consistently across all candidate records. Don’t overlook internal notes, email threads, or file attachments, as these often contain hidden personal information.

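When deciding between pseudonymization and full anonymization, consider your goals. Pseudonymization replaces identifiers with a token (such as a keyed hash) and keeps the key or lookup table separately, so records can be re-linked if a candidate reapplies. Full anonymization is irreversible and offers stronger privacy safeguards. Keep in mind that under GDPR, pseudonymized data still counts as personal data, while truly anonymized data does not.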

| Field Name | Transformation Technique | Resulting Value Example |
|---|---|---|
| Name and Surname | Omit / Redact | N/A or [Redacted] |
| Email Address | Omit / Redact | N/A |
| Social Media Links | Omit | N/A |
| Candidate ID | Cryptographic Hashing | ZaXutakNdAPIIC-4MHSAC6Sg… |
| Phone Number | Omit / Redact | N/A |
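The field-level rules in the table can be expressed as a small lookup applied to every record. This is a sketch under assumed field names (`name`, `email`, `phone`, `social_links`); fields with no rule are kept unchanged, which is a choice a stricter policy might reverse.

```python
REDACTED = "[Redacted]"

# Assumed field names; "omit" drops the field, "redact" keeps a placeholder.
RULES = {
    "name": "redact",
    "email": "omit",
    "phone": "omit",
    "social_links": "omit",
}


def anonymize_record(record: dict, candidate_id: str) -> dict:
    """Apply the per-field rules and attach the candidate ID."""
    result = {"candidate_id": candidate_id}
    for field, value in record.items():
        rule = RULES.get(field, "keep")
        if rule == "omit":
            continue
        result[field] = REDACTED if rule == "redact" else value
    return result


raw = {
    "name": "Jane Doe",
    "email": "jane.doe@example.com",
    "phone": "555-123-4567",
    "skills": ["Python", "SQL"],
}
clean = anonymize_record(raw, "cand_0001")
print(clean)
```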

Once personal identifiers are handled, focus on anonymizing demographic data to strengthen privacy while retaining analytic value.

Remove Demographic Information

Demographic data, like gender, age, race, ethnicity, marital status, and nationality, can unintentionally introduce bias into hiring decisions. Many modern systems can automatically mask these fields during the application review process. If you’re working manually, delete or mask these fields from resumes and forms.

However, completely removing demographic data may limit its usefulness for analyzing diversity and hiring trends. To balance privacy with data utility, use generalization (or "binning") to convert precise details into broader categories. For instance, replace specific ages with ranges like "30-40 years".
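Binning is a one-line calculation. The sketch below uses non-overlapping ten-year bands (for example "30-39") so that an age on a boundary falls into exactly one band; the band width and the `age_band` name are assumptions.

```python
def age_band(age: int, width: int = 10) -> str:
    """Map an exact age to a non-overlapping range such as '30-39'."""
    lower = (age // width) * width
    return f"{lower}-{lower + width - 1}"


print(age_band(34))
print(age_band(40))
```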

Another option is cryptographic hashing, where categorical fields like gender or ethnicity are replaced with keyed hash values (e.g., using HMAC). This allows grouping and counting without exposing cleartext information, provided the key stays secret; an unkeyed hash of a field with only a few possible values is easy to reverse by hashing each option. To further enhance privacy, apply k-anonymity scoring, which checks that every combination of indirect identifiers in your dataset is shared by at least k records, so each candidate is indistinguishable from at least k-1 others. Studies suggest setting k to 5 for effective protection.
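A k-anonymity check can be sketched by grouping records on their quasi-identifiers and finding the smallest group. The field names `age_band` and `region` and the sample data are assumptions for illustration.

```python
from collections import Counter


def group_sizes(records: list, quasi_identifiers: list) -> Counter:
    """Count how many records share each combination of quasi-identifier values."""
    return Counter(tuple(r[q] for q in quasi_identifiers) for r in records)


def k_anonymity(records: list, quasi_identifiers: list) -> int:
    """The dataset's k is the size of its smallest group."""
    return min(group_sizes(records, quasi_identifiers).values())


def groups_below(records: list, quasi_identifiers: list, k: int = 5) -> dict:
    """Return the combinations that fall short of the target k."""
    sizes = group_sizes(records, quasi_identifiers)
    return {group: n for group, n in sizes.items() if n < k}


records = (
    [{"age_band": "30-39", "region": "West"}] * 5
    + [{"age_band": "40-49", "region": "West"}] * 2
)
qi = ["age_band", "region"]
print(k_anonymity(records, qi))
print(groups_below(records, qi))
```

Groups that fall below the threshold are candidates for further generalization (wider bands, broader regions) or suppression before the data is released.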

Finally, test your anonymization efforts with the "motivated intruder" test. This evaluates whether someone with access to public records (like LinkedIn or social media) could identify a candidate from your anonymized data.

Anonymize Work History and Education

Work history and education often require special attention. These details are critical for assessing qualifications but can also reveal identities if they include specific company or school names and unique job titles. To preserve relevance while protecting privacy:

- Replace company names with general descriptors like "Fortune 500 Technology Company" or "Mid-Size Healthcare Provider."

- Swap out school names for categories like "Top 50 U.S. University" or "State University."

- Generalize graduation years and employment dates into ranges, e.g., "Graduated 2015–2020" instead of "Graduated in 2018."

- Simplify job titles, such as replacing "Senior DevOps Engineer at Amazon Web Services" with "Senior Engineering Role at Large Tech Company."

These steps maintain the context of a candidate’s background without compromising their anonymity.
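The generalizations in the list above might be sketched as follows. The mapping tables (`EMPLOYER_CATEGORIES`, `ROLE_FAMILIES`) are hypothetical and would need to be maintained by the hiring team; the five-year buckets are non-overlapping, so 2018 becomes "2015-2019".

```python
# Hypothetical mappings; a real deployment would maintain these centrally.
EMPLOYER_CATEGORIES = {"Amazon Web Services": "Large Tech Company"}
ROLE_FAMILIES = {"engineer": "Engineering Role", "nurse": "Clinical Role"}
SENIORITY = ("Junior", "Senior", "Lead", "Principal")


def year_range(year: int, width: int = 5) -> str:
    """Replace an exact year with a fixed-width bucket."""
    start = year - year % width
    return f"{start}-{start + width - 1}"


def generalize_job(title: str, employer: str) -> str:
    """Keep seniority and role family; drop the specific title and employer."""
    words = title.lower().split()
    seniority = next((s for s in SENIORITY if s.lower() in words), "")
    family = next(
        (label for keyword, label in ROLE_FAMILIES.items() if keyword in title.lower()),
        "Professional Role",
    )
    category = EMPLOYER_CATEGORIES.get(employer, "Undisclosed Employer")
    return f"{seniority} {family} at {category}".strip()


print(generalize_job("Senior DevOps Engineer", "Amazon Web Services"))
print(year_range(2018))
```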

Protect Communication Channels

Candidate communications often contain sensitive details, such as email addresses, phone numbers, and notes from interviews. To safeguard this information:

- Route communications through a generic email address (e.g., recruiting@company.com) instead of personal accounts. This limits the spread of candidates’ contact details.

- Use automated scheduling tools to send interview invitations without exposing candidates’ email addresses.

- Establish guidelines for internal notes and feedback forms. Avoid mentioning candidates by name or including unnecessary personal details. Use candidate IDs instead.

- Review third-party tools (e.g., video interview platforms) to ensure they follow the same anonymization standards. Confirm that these tools anonymize or delete stored data in line with your retention policies.
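For the internal-notes guideline, a simple safeguard is to replace a candidate's name with their ID before a note is saved. This sketch uses only the standard `re` module; the `scrub_note` helper is an assumption, and plain name matching will miss nicknames and can wrongly replace names that are also common words.

```python
import re


def scrub_note(note: str, full_name: str, candidate_id: str) -> str:
    """Replace the full name and each of its parts with the candidate ID."""
    parts = [full_name] + full_name.split()
    # Longest first, so the full name is replaced before its individual parts.
    parts.sort(key=len, reverse=True)
    pattern = re.compile(
        "|".join(rf"\b{re.escape(p)}\b" for p in parts),
        re.IGNORECASE,
    )
    return pattern.sub(candidate_id, note)


note = "Jane Doe interviewed well; Jane needs more SQL practice."
print(scrub_note(note, "Jane Doe", "C-1042"))
```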

Use Automation Tools for Anonymization

Manual anonymization can work for small-scale operations but becomes impractical with large volumes of data. Automation tools built into modern applicant tracking systems can simplify and standardize the process.

Platforms like Skillfuel offer centralized dashboards to manage unique candidate IDs and maintain data consistency. These tools can also enforce data retention rules, automatically anonymizing data after specific triggers, such as candidate rejection or inactivity.

AI-powered resume redaction is another useful feature. Advanced systems use machine learning to blur sensitive details – like names, photos, and social media links – during the initial review stages. This reduces unconscious bias while allowing recruiters to access full profiles later.

For bulk anonymization, some platforms support batch processing, anonymizing up to 1,000 profiles at once via CSV uploads or scheduled jobs. Others include a "Mass Anonymize" feature to replace identifying details with placeholders like asterisks or random strings.

When setting up automated anonymization, ensure consistent masking rules for all data types. For example, replace all numbers with "0000" or dates with "1900-01-01" to retain database structure without revealing real data. Verify that the tool also scrubs associated records, such as timesheets, placements, and email logs, to eliminate residual personal information.
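A batch job that applies consistent masking rules to a CSV export could look roughly like this. The column names, the eight-asterisk placeholder, and the `mask_csv` helper are assumptions; the "0000" and "1900-01-01" placeholders follow the examples above.

```python
import csv
import io
import re


def mask_value(value: str) -> str:
    """Pick a placeholder that preserves the column's type."""
    if re.fullmatch(r"\d{4}-\d{2}-\d{2}", value):
        return "1900-01-01"          # ISO dates keep a valid date shape
    if re.fullmatch(r"[\d\s()+.-]+", value) and any(c.isdigit() for c in value):
        return "0000"                # numbers and phone-like strings
    return "*" * 8                   # any other text


def mask_csv(source: str, fields_to_mask: set) -> str:
    """Mask the chosen columns in CSV text and return the masked CSV."""
    reader = csv.DictReader(io.StringIO(source))
    out = io.StringIO()
    writer = csv.DictWriter(out, fieldnames=reader.fieldnames, lineterminator="\n")
    writer.writeheader()
    for row in reader:
        for field in fields_to_mask:
            if row.get(field):
                row[field] = mask_value(row[field])
        writer.writerow(row)
    return out.getvalue()


raw_csv = (
    "candidate_id,name,phone,dob,skills\n"
    "C-1,Jane Doe,555-123-4567,1990-04-12,Python\n"
)
masked = mask_csv(raw_csv, {"name", "phone", "dob"})
print(masked)
```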


Meeting Regulatory Requirements

Once anonymization processes are in place, aligning them with legal requirements becomes essential. Anonymizing candidate data isn’t just a best practice; it also supports legal obligations under privacy, anti-discrimination, and automated decision-making regulations. HR teams must ensure their workflows comply with whichever of these rules apply to them. Failing to meet these standards can result in steep penalties, such as fines of up to €20 million or 4% of global annual turnover (whichever is higher) under GDPR, or up to $5,000 for misdemeanor violations under the Privacy Act.

Regulations That Apply to Candidate Data

On the federal level, the Privacy Act of 1974 governs how federal agencies handle records about individuals, including recruitment records. It mandates written consent before disclosing personal data and gives individuals the right to access and correct their information. Additionally, the Equal Employment Opportunity Commission (EEOC) enforces anti-discrimination laws, including Title VII of the Civil Rights Act, the Americans with Disabilities Act (ADA), the Genetic Information Nondiscrimination Act (GINA), and the Age Discrimination in Employment Act (ADEA). These laws require employers to maintain records that demonstrate fair hiring practices.

State-specific laws add more complexity. For example:

- California’s CCPA (effective April 2026) mandates risk assessments and pre-use notices for automated decision-making technology (ADMT).

- Colorado’s S.B. 205 (effective June 2026) requires bias audits for high-risk AI systems used in hiring.

- Illinois’ AI Video Interview Act demands explicit consent and clear data retention policies when AI is used for video interview analysis.

- New York City enforces bias audits and transparency for automated employment decision tools (AEDT).

"An employer’s use of automated decision systems may violate the Fair Employment and Housing Act (FEHA) if it results in discrimination on the basis of a protected characteristic." – California Office of Administrative Law

| Jurisdiction | Regulation/Law | Key Requirement for Candidate Data |
|---|---|---|
| Federal | Privacy Act of 1974 | Restricts record disclosure without consent; allows data access/correction |
| California | CCPA (11 CCR § 7120) | Risk assessments and opt-out rights for ADMT |
| Colorado | S.B. 205 | Bias audits for high-risk AI systems (effective June 2026) |
| Illinois | AI Video Interview Act | Consent and retention policies for AI video analysis |
| New York City | Admin. Code § 20-871 | Mandatory bias audits and transparency for AEDT systems |

To comply, employers should conduct annual bias audits for AI tools in jurisdictions like NYC and Colorado. In California, pre-use notices must be issued before deploying automated decision-making technology. Illinois requires clear data retention timelines for AI-analyzed video interviews. When candidates request access to their records, verifying their identity – via a driver’s license or notarized statement – is crucial to avoid unauthorized disclosures. These regulatory demands reinforce the need for robust anonymization practices.

How Skillfuel Supports Compliance

Navigating these regulatory challenges requires tools that streamline and document compliance. Skillfuel offers features tailored to meet GDPR, CCPA, and other privacy standards, helping organizations avoid the legal and reputational risks tied to poor anonymization.

For example, when candidates exercise their "Right to Erasure" under GDPR, Skillfuel provides a one-click anonymization option for individual profiles. Organizations generally have one month (roughly 30 days) to fulfill such requests, and Skillfuel’s centralized dashboard helps teams meet those deadlines. The system removes all Personally Identifiable Information (PII) while retaining non-identifiable data for analytics and reporting.

"The anonymization of data deletes all the PII (Personally Identifiable Information) of the respective candidates. The remaining data is kept intact to ensure that the remaining data can be used for reports." – Freshteam

Skillfuel also logs the legal basis for processing data – such as Legitimate Interest or Consent – ensuring compliance with GDPR Article 6. Its GDPR-compliant storage and security features safeguard candidate data, reducing the risk of breaches, which must be reported to authorities within 72 hours of discovery.
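The two deadlines mentioned in this section, roughly 30 days for erasure requests and 72 hours for breach reports, are easy to track with plain date arithmetic. This sketch is not tied to Skillfuel or any other product; the function names are assumptions, and the 30-day window is the simplified figure used in this article (GDPR itself speaks of one month).

```python
from datetime import datetime, timedelta, timezone

ERASURE_WINDOW = timedelta(days=30)   # simplified; GDPR's wording is "one month"
BREACH_WINDOW = timedelta(hours=72)


def erasure_due(received_at: datetime) -> datetime:
    """Latest time by which an erasure request should be fulfilled."""
    return received_at + ERASURE_WINDOW


def breach_report_due(discovered_at: datetime) -> datetime:
    """Latest time by which a breach should be reported to the authority."""
    return discovered_at + BREACH_WINDOW


def is_overdue(due: datetime, now: datetime) -> bool:
    return now > due


received = datetime(2025, 3, 1, 9, 0, tzinfo=timezone.utc)
print(erasure_due(received).isoformat())
print(breach_report_due(received).isoformat())
```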

Monitoring and Maintaining Anonymization Practices

Keeping candidate data anonymized isn’t a one-and-done task. As regulations and workflows shift, HR teams need to treat anonymization as an ongoing process. Regular testing, updates, and monitoring ensure compliance stays intact, even as legal and organizational needs evolve.

Perform Regular Audits

After aligning with regulations, the next step is consistent monitoring. Schedule quarterly audits to review all data sources – your ATS, shared drives, spreadsheets, and third-party tools – to confirm they’re documented and secured. These audits help uncover any untracked copies of candidate data that might bypass anonymization protocols.

Another key practice is running re-identification tests. Using a statistical framework, these tests measure the effectiveness of anonymization and highlight areas where methods might need improvement. Quarterly access log audits are also critical. They help detect unauthorized attempts to re-link pseudonymized data. Keep detailed logs that track who anonymized the data and who accessed any information that could reverse that process. Investigate unusual patterns immediately to prevent potential breaches.
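An access log audit for re-linking attempts might be sketched like this. The log format (dicts with `user`, `action`, and `candidate_id` keys), the allow-list, and the volume threshold are all assumptions; real ATS logs will differ.

```python
from collections import Counter

# Hypothetical allow-list of accounts permitted to re-link pseudonymized data.
AUTHORIZED_RELINKERS = {"dpo@example.com"}


def audit_relink_events(log: list, max_per_user: int = 20) -> list:
    """Flag re-link actions by unauthorized users or in unusual volume."""
    findings = []
    relinks = [event for event in log if event["action"] == "relink"]
    for event in relinks:
        if event["user"] not in AUTHORIZED_RELINKERS:
            findings.append(
                f"unauthorized relink by {event['user']} on {event['candidate_id']}"
            )
    for user, count in Counter(event["user"] for event in relinks).items():
        if count > max_per_user:
            findings.append(f"unusual volume: {user} performed {count} relinks")
    return findings


log = [
    {"user": "dpo@example.com", "action": "relink", "candidate_id": "C-1"},
    {"user": "analyst@example.com", "action": "view", "candidate_id": "C-2"},
    {"user": "analyst@example.com", "action": "relink", "candidate_id": "C-3"},
]
findings = audit_relink_events(log)
print(findings)
```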

| Audit Activity | Frequency | Objective |
|---|---|---|
| Data Map Review | Quarterly | Ensure all new data sources and types are documented. |
| Access Log Audit | Quarterly | Detect unauthorized access or data re-linking. |
| Bias Audit | Regular Intervals | Verify AI tools aren’t introducing discriminatory patterns. |
| Vendor Spot Checks | Quarterly/Annual | Confirm sub-processors and data center compliance. |
| Penetration Testing | Annual | Test encryption strength and security measures. |

Third-party vendors shouldn’t be overlooked, either. Conduct annual audits or spot checks to confirm they follow Data Processing Agreements (DPAs) and maintain up-to-date security certifications. Check their sub-processor lists and data center locations, as changes in their infrastructure could create new risks. Additionally, annual penetration tests help ensure encryption and access controls can handle emerging threats.

Update Policies and Train Staff

Regulations are constantly evolving. For example, Colorado’s S.B. 205, effective June 30, 2026, will require bias audits for high-risk AI hiring systems, while California’s CCPA amendments, starting April 1, 2026, mandate detailed risk assessments for automated decision-making tools. Assign a Data Protection Officer (DPO) or team member to track these changes and update anonymization policies as needed. Joining professional networks can also provide timely updates, such as insights on the UK’s Data (Use and Access) Act, which received Royal Assent on June 19, 2025 and is being brought into force in stages.

Training is equally critical. Tailor sessions to specific roles:

- Recruiters should learn about data minimization practices and the lawful bases for processing data, like consent versus legitimate interest.

- Hiring managers need to understand the risks of storing interview notes in unsecured locations, like shared drives.

Training should also include algorithmic literacy. Employees need to grasp how AI-driven anonymization tools work, their limitations, and the reasoning behind their outputs. This helps prevent automation bias, where staff might blindly trust AI recommendations without questioning them.

The importance of training can’t be overstated. A recent statistic shows that 66% of companies undergoing data privacy audits in the past three years failed at least once. And as noted earlier, a handful of indirect identifiers (gender, ZIP code, and date of birth) is often enough to single out an individual. Employees must understand that context determines whether data is sensitive. For instance, a list of names alone isn’t sensitive, but a list of names tied to a medical clinic is.

Finally, configure ATS systems to automatically anonymize or delete candidate data after retention periods (typically 6–12 months for unsuccessful applicants). Set reminders to request renewed consent 30 days before retention periods end. When data is deleted, retain a metadata stub – this keeps a record of the candidate ID, data type, and deletion date, proving compliance without storing personal information. By combining regular audits, policy updates, and training, your anonymization practices will stay aligned with both legal requirements and technological advancements.
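The metadata stub and the consent reminder can be sketched in a few lines. The record layout, the default `data_type`, and the function names are assumptions for illustration; the 30-day lead time follows the text above.

```python
from datetime import date, timedelta


def consent_reminder_date(retention_ends: date, lead_days: int = 30) -> date:
    """Date on which to ask the candidate for renewed consent."""
    return retention_ends - timedelta(days=lead_days)


def delete_with_stub(record: dict, deleted_on: date) -> dict:
    """Discard the record's contents and keep only proof that it was deleted."""
    return {
        "candidate_id": record["candidate_id"],
        "data_type": record.get("data_type", "application"),
        "deleted_on": deleted_on.isoformat(),
    }


record = {
    "candidate_id": "cand_0001",
    "data_type": "application",
    "name": "Jane Doe",
    "email": "jane.doe@example.com",
}
stub = delete_with_stub(record, date(2025, 9, 1))
print(stub)
print(consent_reminder_date(date(2025, 9, 1)))
```

The stub holds no personal fields, so it can outlive the retention period as an audit trail entry.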

Conclusion

Anonymizing candidate data creates a hiring process that’s fairer and more defensible. By removing names, photos, addresses, and other identifying or demographic details, your team can focus on what truly matters: skills, qualifications, and fit. Research has shown that concealing candidate identifiers can significantly reduce hiring bias. Plus, it helps protect your organization from hefty GDPR penalties.

The checklist provided – covering steps like identifying personal information, creating unique candidate IDs, automating retention schedules, and conducting regular audits – serves as a guide for scaling anonymization. Manual methods often fall short when applied at scale. That’s where tools like Skillfuel step in. By automating the removal of high-bias fields, managing consent timestamps, and maintaining audit trails, Skillfuel not only reduces administrative burdens but also supports "data protection by design" standards.

This approach highlights the practical benefits of strong privacy practices.

"Strong privacy practices protect you from penalties and give you a competitive advantage. Candidates engage more when they see you collect only relevant information, store it securely, and delete it on schedule." – Alex.com

Anonymization also ensures you can still leverage data effectively. You’ll be able to run diversity reports and recruitment analytics using aggregate, non-identifiable data. This means you’re not losing valuable insights – you’re building trust with candidates and enhancing your employer brand.

Think of this checklist as a flexible framework. With the right tools, ongoing audits, and continuous training, you can create a hiring process that’s both compliant and genuinely equitable.

FAQs

How does anonymizing candidate data help reduce bias in hiring?

Anonymizing candidate data is a practical way to reduce bias in hiring. By removing personal details like names, hometowns, or demographic indicators, hiring managers are encouraged to focus purely on a candidate’s skills, qualifications, and experience. This approach helps ensure evaluations are based on merit rather than subjective factors.

Studies indicate that stripping applications of details such as gender, age, or ethnicity can significantly limit unconscious bias. This not only levels the playing field but also supports efforts to create a more diverse and inclusive workforce. By prioritizing qualifications over personal identifiers, companies can make strides toward fairer hiring practices.

What steps should HR teams take to anonymize candidate data while staying compliant with privacy laws?

To protect candidate privacy and align with privacy laws, the process starts with assessing the risk of re-identification. This means evaluating how easily someone could match anonymized data back to an individual. A practical approach is pseudonymization, where personal identifiers are replaced with pseudonyms. This method keeps the data useful while significantly lowering the risk of exposing someone’s identity.

Once data is anonymized, it’s crucial to store it securely. Use safeguards like encryption, role-based access controls, and regular audits to ensure only authorized personnel can access the information. These measures act as a strong defense against breaches or unauthorized use.

Additionally, following principles like data minimization – only collecting what’s necessary – and transparency about how data is handled ensures compliance with privacy regulations such as GDPR and CCPA. These steps not only protect candidates but also reduce the risk of legal and ethical issues.

By adopting these methods, HR teams can effectively protect sensitive information while maintaining trust and compliance throughout the hiring process.

Why is regular maintenance important for anonymizing candidate data effectively?

Regular maintenance plays a key role in ensuring candidate data anonymization stays accurate, compliant, and free from bias throughout the hiring process.

By consistently reviewing and fine-tuning anonymization methods, HR teams can minimize the chances of unconscious bias, adapt to changing data privacy regulations, and safeguard sensitive information. This ongoing effort not only protects candidates’ privacy but also builds trust and promotes fairness, all while keeping the recruitment process efficient.