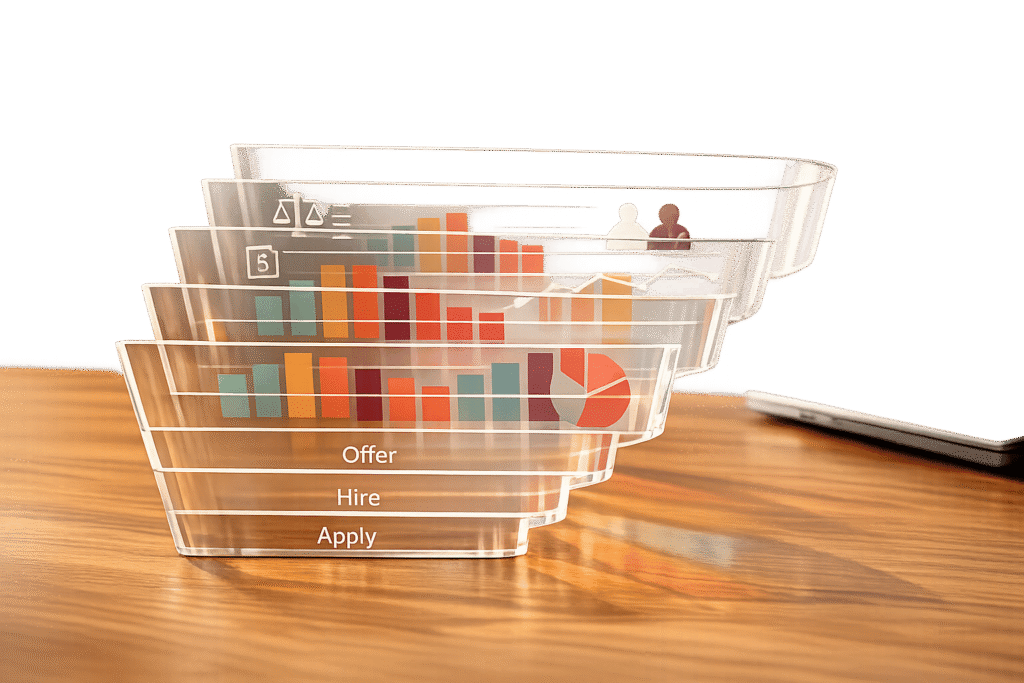

Hiring feels like guesswork when evaluations lack structure. Role-specific rubrics solve this by offering clear, tailored scoring frameworks that define success for each job. Research shows they improve hiring accuracy by 34% and make structured interviews 3x more predictive of job performance than unstructured ones. By focusing on job-specific skills and behaviors, rubrics reduce bias, ensure consistent evaluations, and help teams make better hiring decisions.

Key Takeaways:

- What They Are: Rubrics outline clear benchmarks for evaluating job-specific skills and behaviors.

- Why They Work: They reduce subjectivity, improve accuracy, and cut bias in hiring.

- Proven Impact: Studies show higher-quality hires, better diversity outcomes, and more consistent evaluations.

This approach transforms hiring into a data-driven process, ensuring fairer, more reliable decisions.


Research Evidence on Improved Accuracy

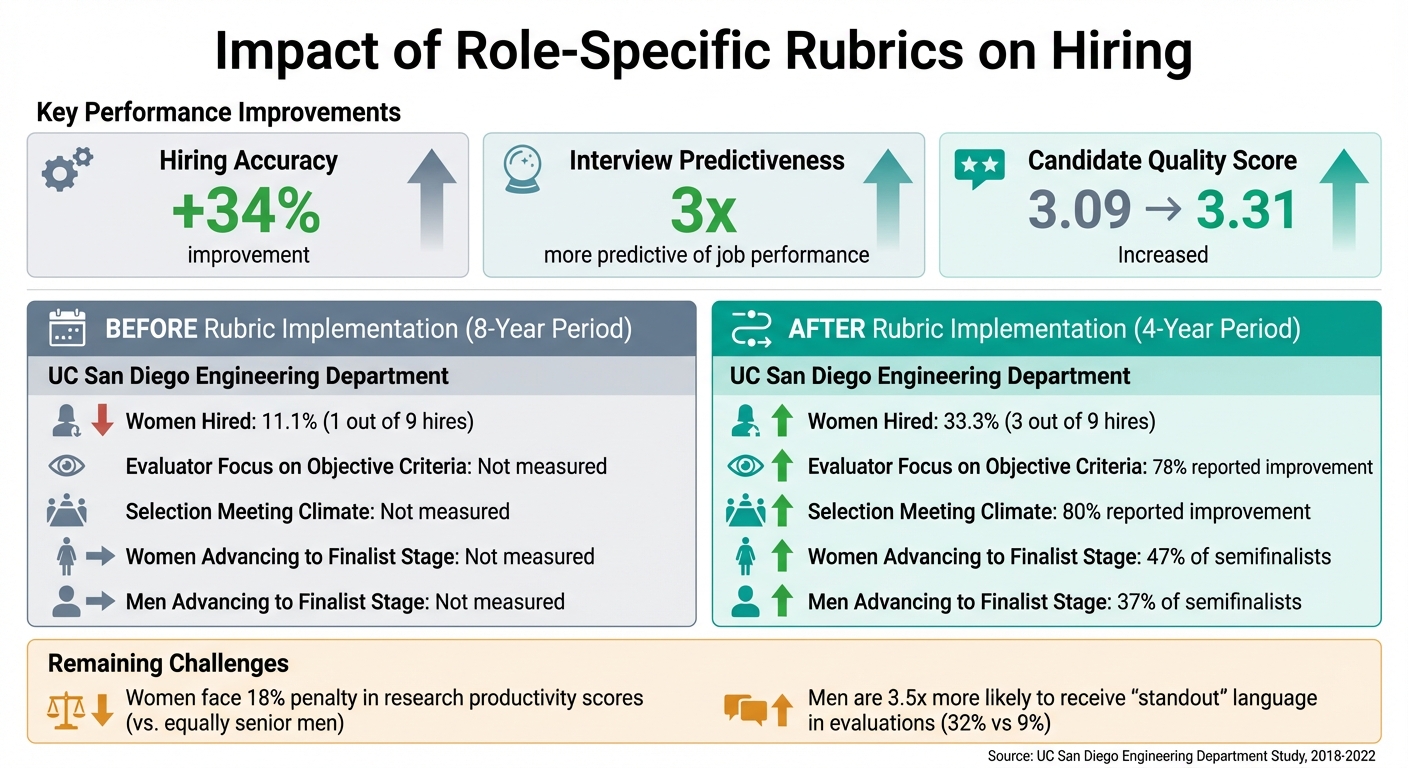

Impact of Role-Specific Rubrics on Hiring Outcomes: Before vs After Implementation

Empirical studies underline the importance of role-specific rubrics in improving both accuracy and fairness during evaluations.

Studies Showing Increased Hiring Accuracy

Research indicates that structured rubrics can improve hiring accuracy by 34%, making it easier to tell consistently strong candidates apart from likely mis-hires.

Rubrics also reshape how evaluators assess candidates. In faculty hiring, 78% of evaluators reported shifting toward objective criteria, while 80% noted a better meeting climate when rubrics were used. These changes lead to more consistent and defensible hiring decisions.

"Rubrics are generally better than no rubrics if they prompt evaluators to slow down and more deliberately consider how well each candidate actually fulfills the previously agreed-upon criteria for the position."

- Mary Blair-Loy, Professor of Sociology, University of California, San Diego

Another study tracked candidate quality scores before and after rubrics were introduced, showing an increase from 3.09 to 3.31 on a validated scale.

Bias Reduction Data

Rubrics don’t just improve accuracy – they also help reduce bias in evaluations.

Between 2018 and 2022, an engineering department at a research-intensive university used a six-dimension hiring rubric to evaluate 62 semifinalists (32 women and 30 men). In the eight years before rubrics, only 11% of the department’s hires were women. During the rubric period, that share rose to 33%, and 47% of female semifinalists advanced to the finalist stage, compared with 37% of male semifinalists.

Rubric scores also make specific bias patterns visible. In the same study, women received research productivity scores about 18% lower than those of equally senior men, a gap that widened to 35% at lower productivity levels.

| Metric | Before Rubric Implementation (8-Year Period) | After Rubric Implementation (4-Year Period) |
|---|---|---|
| Proportion of Women Hired | 11.1% (1 out of 9 hires) | 33.3% (3 out of 9 hires) |
| Evaluators Reporting Better Focus on Objective Criteria | Not measured | 78% |
| Evaluators Reporting Improved Selection Meeting Climate | Not measured | 80% |
| Female Semifinalists Advancing to Finalist Stage | Not measured | 47% |
| Male Semifinalists Advancing to Finalist Stage | Not measured | 37% |

Yet, rubrics are not flawless. As Professor Mary Blair-Loy points out, "Individual evaluator bias can get smuggled into evaluations in this seemingly objective process… rubrics are not a panacea". For example, even with rubrics, men are 3.5 times more likely to receive "standout" language in evaluations compared to women. This highlights the need for pairing rubrics with evaluator training and ongoing oversight to ensure their proper use.

Key Benefits of Role-Specific Rubrics

Role-specific rubrics bring structure and clarity to candidate evaluation, building on the improvements in hiring accuracy and bias reduction described above. They make the process evidence-based and focused on measurable skills.

Standardized Criteria Reduce Subjectivity

Using rubrics eliminates ambiguous terms like "team player" or "culture fit" and replaces them with clear, observable behaviors. Instead of relying on gut instincts, interviewers are guided to provide specific evidence, such as "identifies root causes and suggests multiple solutions under pressure". This approach shifts evaluations from vague impressions like "seemed confident" to detailed observations like "calmly coordinated with ops during a system outage".

The trend toward skills-based hiring is growing, with 64.8% of employers now using this approach for entry-level roles, and 73% adopting competency-based job descriptions to support it. By tying every rubric criterion directly to the job description, evaluators stay focused on essential skills rather than subjective preferences or unrelated qualities.

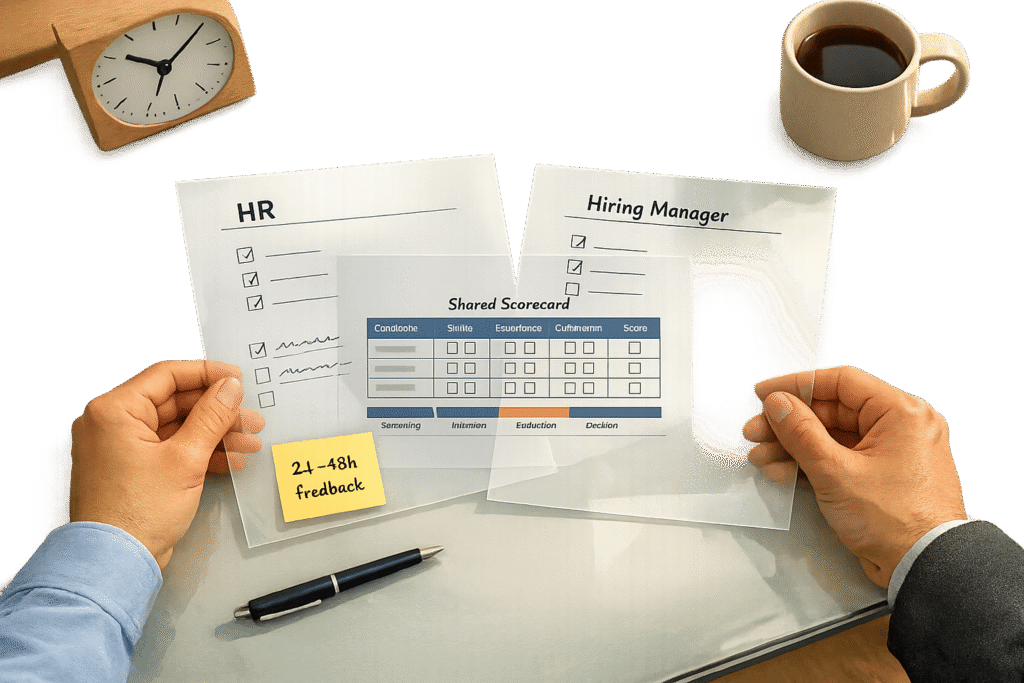

Consistency Across Evaluators

Rubrics also tackle the challenge of inconsistent evaluations by creating a shared framework for assessing candidates. Whether it’s recruiters, hiring managers, or peer interviewers, everyone uses the same behavioral anchors to define excellence. This eliminates the variability that often arises when different interviewers interpret the same performance in different ways.

Calibration workshops further strengthen this consistency. In these sessions, interviewers independently score a sample response, then compare and discuss their ratings to align their interpretations. This process ensures inter-rater reliability, meaning the candidate’s score reflects their actual performance, not the perspective of a particular interviewer. Danielle Harders, Director of Global Business Recruiting at Brex, highlights the impact of this approach:

"Having these structured rubrics has helped interviewers feel a lot more confident in their decision making. With the platform, we can move from gut feel to concrete data".

This consistency helps ensure fairer, more reliable evaluations.
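A calibration session can be summarized with a very simple check. The sketch below flags competencies where interviewers' scores for the same sample response differ by more than one point. The function name `calibration_gaps`, the `max_spread` parameter, and the sample data are all hypothetical, and a score spread is a much cruder measure than formal inter-rater reliability statistics such as Cohen's kappa.

```python
from __future__ import annotations


def calibration_gaps(
    scores_by_rater: dict[str, dict[str, int]], max_spread: int = 1
) -> dict[str, int]:
    """Return each competency whose scores differ across raters by more than max_spread."""
    gaps = {}
    # Use the first rater's scorecard to enumerate the competencies.
    competencies = next(iter(scores_by_rater.values()))
    for competency in competencies:
        scores = [rater[competency] for rater in scores_by_rater.values()]
        spread = max(scores) - min(scores)
        if spread > max_spread:
            gaps[competency] = spread
    return gaps


# Hypothetical ratings of the same sample response from three interviewers.
sample_scores = {
    "recruiter": {"problem_solving": 4, "communication": 3},
    "hiring_manager": {"problem_solving": 2, "communication": 3},
    "peer_interviewer": {"problem_solving": 4, "communication": 4},
}

print(calibration_gaps(sample_scores))  # {'problem_solving': 2}
```

Competencies that show up in the result are the ones worth discussing against the behavioral anchors before the next round of interviews.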

Promoting Equal Assessments

Rubrics help level the playing field by holding all candidates to the same evaluation standards. Evidence-based scoring – where every competency is assigned a numerical score supported by specific examples – prevents decisions from being influenced by the most vocal team member or by vague impressions.
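One way to enforce evidence-based scoring in a scorecard tool is to refuse any score that arrives without a supporting example. The following is a minimal sketch under that assumption. The `CompetencyScore` class and its field names are hypothetical and are not taken from any particular applicant tracking system.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class CompetencyScore:
    competency: str
    score: int  # rating on the 1-5 scale
    evidence: list[str] = field(default_factory=list)  # what the candidate said or did

    def __post_init__(self) -> None:
        if not 1 <= self.score <= 5:
            raise ValueError("score must be on the 1-5 scale")
        # Reject scores that rest on impressions alone.
        if not any(line.strip() for line in self.evidence):
            raise ValueError(f"{self.competency}: score needs a specific example")


entry = CompetencyScore(
    competency="incident_response",
    score=4,
    evidence=["Calmly coordinated with ops during a system outage"],
)
print(entry.score, entry.evidence[0])
```

Because validation runs when the record is created, a score with no evidence never reaches the debrief.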


Best Practices for Developing Role-Specific Rubrics

Start by defining what success looks like for the role. Identify 2–3 key deliverables the new hire should achieve, like reducing page load times by 25% or generating $500,000 in sales. Then, determine the 4–7 core competencies essential for meeting those goals – these might include technical expertise, problem-solving skills, or relevant domain knowledge. Capping the list at seven competencies helps evaluators stay focused and prevents them from feeling overwhelmed. These steps put the standardized criteria described earlier into practice.

Defining Role-Specific Criteria

Once you’ve outlined the competencies, assign weights to reflect their importance. You can use percentages that total 100% or a 1–5 importance scale. Weighting ensures that strengths in less critical areas don’t overshadow weaknesses in key ones. For example, if system design is twice as important as communication for a backend engineer, the rubric should give it twice the weight. Structured interviews with rubrics are reported to be roughly three times more predictive of job performance than unstructured ones, so this step is crucial for aligning evaluations with job success.
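As a rough illustration of the weighting step, the sketch below turns 1–5 ratings into a single weighted score, expressed as a percentage of the maximum. The competency names, the weights, and the `weighted_score` helper are hypothetical, chosen only to mirror the backend engineer example above.

```python
from __future__ import annotations

# Hypothetical weights for a backend engineer role; they must total 100%.
WEIGHTS = {
    "system_design": 0.40,  # weighted twice as heavily as communication
    "coding": 0.25,
    "communication": 0.20,
    "problem_solving": 0.15,
}


def weighted_score(ratings: dict[str, int], weights: dict[str, float]) -> float:
    """Return the weighted rubric score as a percentage of the maximum (0-100)."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must total 100%")
    if set(ratings) != set(weights):
        raise ValueError("every weighted competency needs exactly one rating")
    for name, rating in ratings.items():
        if not 1 <= rating <= 5:
            raise ValueError(f"{name}: rating must be on the 1-5 scale")
    # Each rating contributes in proportion to its weight; 5 is the top rating.
    return 100 * sum(weights[name] * ratings[name] for name in weights) / 5


ratings = {"system_design": 4, "coding": 5, "communication": 3, "problem_solving": 4}
print(round(weighted_score(ratings, WEIGHTS), 1))  # 81.0
```

Here a weaker communication rating lowers the total only modestly, because system design carries twice its weight.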

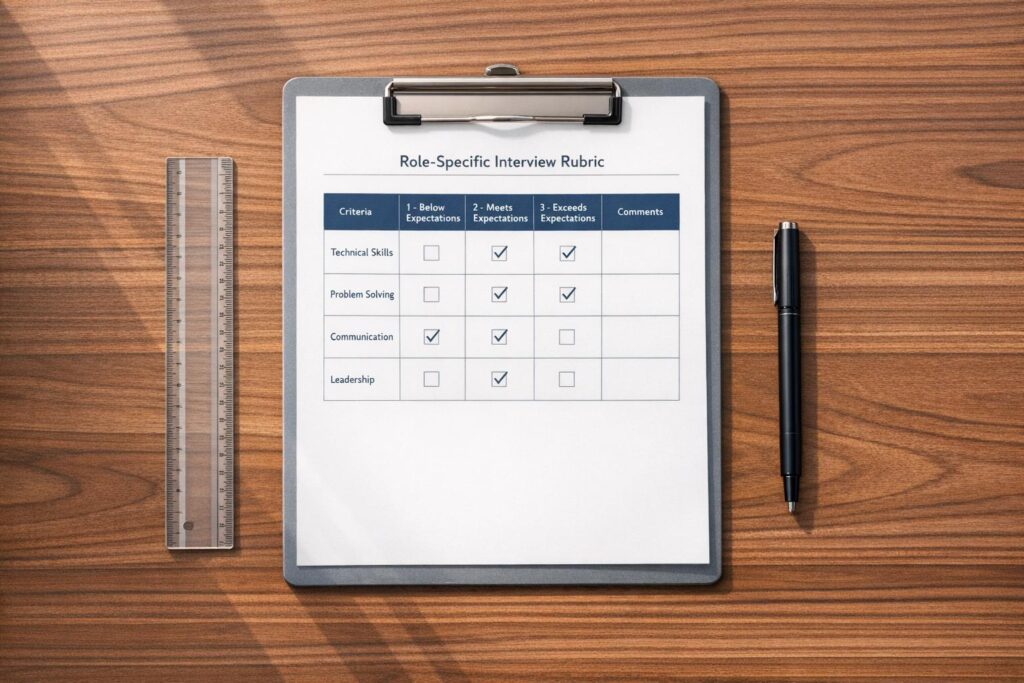

Using Clear Scoring Scales with Behavioral Anchors

A 1–5 rating scale works best when tied to specific, observable behaviors. Avoid vague descriptions like "good communicator" and instead define what each score means in practice. For instance, in problem-solving, a "1" might mean "requires heavy prompting and fails to identify the root cause", while a "5" could mean "quickly identifies root cause and proposes a solution with a testing plan and contingencies". This approach ensures evaluations are based on actual behaviors rather than gut feelings. To make the process even more effective, link each interview question to a specific competency so you’re gathering the right evidence.
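Behavioral anchors and the question-to-competency link can both be kept as plain data. The sketch below is one possible layout, not a prescribed format. The anchors for scores 1 and 5 are the ones quoted above, while the anchor for score 3, the sample questions, and the helper names are hypothetical.

```python
from __future__ import annotations

# Anchors for 1 and 5 come from the text; the 3 anchor is a hypothetical midpoint.
ANCHORS = {
    "problem_solving": {
        1: "Requires heavy prompting and fails to identify the root cause",
        3: "Identifies the root cause with some prompting",
        5: "Quickly identifies root cause and proposes a solution "
           "with a testing plan and contingencies",
    },
}

# Hypothetical question bank: each question names the competency it gathers evidence for.
QUESTIONS = {
    "Walk me through how you diagnosed a recent production issue.": "problem_solving",
    "Where do you see yourself in five years?": None,
}


def anchor_for(competency: str, score: int) -> str | None:
    """Return the behavioral anchor for a score, or None if that level has no anchor."""
    return ANCHORS[competency].get(score)


def unlinked_questions(questions: dict[str, str | None]) -> list[str]:
    """List questions that are not tied to any competency in the rubric."""
    return [q for q, competency in questions.items() if competency not in ANCHORS]


print(anchor_for("problem_solving", 1))
print(unlinked_questions(QUESTIONS))
```

Questions returned by `unlinked_questions` gather no rubric evidence, so they are candidates for removal or for mapping to a competency.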

Resolving Rater Discrepancies

Even with clear guidelines, evaluators may sometimes disagree. To address this, hold calibration sessions where the team reviews and rates a recorded or mock interview independently, then discusses their differences using the behavioral anchors as a guide. Monthly calibration sessions can help maintain consistency. Require evaluators to back up their scores with at least two lines of factual evidence – such as "Candidate explained caching tradeoffs using latency calculations" – to ensure scores are auditable and defensible during debriefs. Track inter-rater reliability over time to identify evaluators who might need additional training. Finally, establish clear decision thresholds, like requiring a weighted score of 70% or higher with no competency rating below 2, to determine whether to extend an offer.
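The decision threshold described above can be expressed as two checks applied together. This is a minimal sketch that assumes the weighted score is reported as a percentage of the top rating of 5. The `meets_offer_bar` function, its parameter names, and the example weights are hypothetical.

```python
from __future__ import annotations


def meets_offer_bar(
    ratings: dict[str, int],
    weights: dict[str, float],
    min_weighted_pct: float = 70.0,
    min_rating: int = 2,
) -> bool:
    """Apply both thresholds: overall weighted score and a per-competency floor."""
    # Assumption: weighted score is a percentage of the maximum rating of 5.
    weighted_pct = 100 * sum(weights[c] * ratings[c] for c in weights) / 5
    return weighted_pct >= min_weighted_pct and min(ratings.values()) >= min_rating


weights = {"system_design": 0.5, "communication": 0.25, "problem_solving": 0.25}

solid = {"system_design": 4, "communication": 4, "problem_solving": 3}
lopsided = {"system_design": 5, "communication": 5, "problem_solving": 1}

print(meets_offer_bar(solid, weights))     # True: 75% and no rating below 2
print(meets_offer_bar(lopsided, weights))  # False: 80%, but one rating is below 2
```

The second candidate shows why the per-competency floor matters: a high weighted total can otherwise hide a serious gap in one area.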

Case Studies and Real-World Outcomes

Impact on Faculty and Technical Hiring

From 2018 to 2022, the engineering department at the University of California, San Diego, used a six-dimension rubric adapted from the University of Michigan’s STRIDE program to evaluate 62 semifinalists. These dimensions guided the hiring process, and the results were striking. Professor Mary Blair-Loy’s analysis revealed a shift: during the rubric phase, 3 out of 9 hires (33%) were women, compared to just 1 out of 9 hires (11%) in the prior eight years. Additionally, 78% of faculty members noted that rubric summaries helped them focus on objective criteria during hiring discussions, while 80% reported an improved atmosphere in these meetings.

"The phase with the increased hiring of women coincides with the period when rubrics were used."

- Mary Blair-Loy, Professor of Sociology, University of California, San Diego

The University of California also created tailored rubrics for Professor of Teaching positions under an NSF AGEP grant. Similarly, Cockroach Labs introduced a five-category rubric for engineering roles, measuring technical excellence, communication, critical thinking, culture add, and potential. By removing vague "culture fit" criteria, they aimed to reduce affinity bias.

Measurable Improvements in Diversity and Efficiency

These examples show how role-specific rubrics can improve hiring practices and outcomes. At UC San Diego, 47% of female semifinalists advanced to the next stage compared to 37% of male semifinalists when rubrics were used. This indicates that rubrics can help counteract bias in finalist selection. However, challenges remain. Female candidates received research productivity scores that averaged 18% lower than those of men with the same seniority and publication records. Additionally, men were 3.5 times more likely to receive "standout" language in written rubric comments (32% vs. 9%).

Faculty evaluators found that rubrics made their discussions more structured and grounded in evidence, reducing reliance on personal biases or anecdotal impressions. These outcomes highlight the importance of structured rubrics in promoting fair and accurate hiring decisions. However, they also underscore the need for ongoing efforts, such as calibration exercises and addressing lingering biases, to fully realize their potential.

Conclusion

Role-specific rubrics transform hiring by replacing subjective opinions with clear, evidence-based evaluations. Research highlights that structured interviews using these rubrics are over three times more predictive of job performance and can improve hiring accuracy by 34%. They also help reduce bias and create a shared standard for excellence among evaluators.

This shift underscores the importance of moving away from instinct-driven decisions. Companies like Brex and EvenUp have embraced data-driven evaluations, revealing and addressing blind spots in their hiring processes.

For HR professionals and talent leaders, the next steps are straightforward: establish behavioral benchmarks, assign weights to key competencies, and hold calibration sessions to ensure consistency. Integrating these rubrics into your applicant tracking system makes them a natural part of your hiring workflow.

As the focus on skills-based hiring continues to grow, structured rubrics pave the way for quicker, fairer, and more defensible hiring decisions, improving both candidate quality and diversity.

FAQs

How do I choose the right competencies for a role-specific rubric?

To build a role-specific rubric, start by identifying the core outcomes and skills that are crucial for the job. Aim to focus on 4–6 measurable and observable competencies – things like problem-solving, communication, or teamwork. For each competency, create behaviorally anchored descriptions that clearly outline what performance looks like at different levels.

Next, align your interview questions with these competencies to maintain consistency throughout the hiring process. Finally, make it a habit to review and update the rubric regularly. Collaborate with hiring teams to refine the competencies and ensure they’re effective at predicting success in the role.

What’s the best way to weight rubric criteria without overcomplicating scoring?

When evaluating candidates, assign simple numeric ratings (like 1–5) to each criterion. Ensure the weights for all criteria add up to 100%. Focus on 4–6 key competencies that are directly tied to the role’s critical outcomes. Use clear, behaviorally anchored performance levels to make expectations transparent and measurable.

To maintain consistency and fairness, conduct regular calibration sessions. These sessions align evaluators on standards and expectations. Additionally, validate outcomes periodically to ensure the process stays reliable and manageable.
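If a team finds it easier to rate importance on a 1–5 scale than to pick percentages directly, the ratings can be normalized so the weights still add up to 100%. The sketch below shows one way to do that. The `importance_to_weights` helper and the competency names are hypothetical.

```python
from __future__ import annotations


def importance_to_weights(importance: dict[str, int]) -> dict[str, float]:
    """Convert 1-5 importance ratings into fractional weights that total 1.0 (100%)."""
    for name, level in importance.items():
        if not 1 <= level <= 5:
            raise ValueError(f"{name}: importance must be between 1 and 5")
    total = sum(importance.values())
    return {name: level / total for name, level in importance.items()}


importance = {"system_design": 4, "coding": 4, "communication": 2}
weights = importance_to_weights(importance)
print({name: f"{weight:.0%}" for name, weight in weights.items()})
# {'system_design': '40%', 'coding': '40%', 'communication': '20%'}
```

Normalizing this way keeps the relative priorities the team agreed on while giving every scorecard the same 100% total.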

How do we keep interviewers scoring consistently and reduce rater bias?

Structured rubrics tailored to specific roles play a key role in maintaining consistent scoring and minimizing rater bias. By offering clear, measurable criteria, these rubrics shift the focus to observable behaviors instead of subjective opinions. They help standardize evaluations across different interviewers, ensuring a more uniform assessment process.

Additionally, regular calibration sessions help interviewers align their scoring standards, keeping evaluations consistent. Tools like numerical scoring systems and behaviorally anchored rating scales further enhance fairness and reliability, making the evaluation process more dependable.